The Sound of Strymon: Sound Designer Pete Celi

Strymon co-founder Pete Celi is responsible for the sound design and DSP algorithm creation for all the Strymon pedals that use DSP. Recently I had the


Rack-mount digital delays of the ’80s ushered in a new era of audio effects. They generated the cleanest delays yet to be heard, but also created their own special and intriguing sonic characteristics. Our thorough investigation of digital delay technology reveals the unique personalities that these delays possess.


The results of our research and development are the sounds and technology found within DIG Dual Digital Delay. DIG unearths the true soul of digital delay and doubles it: two simultaneous, integrated delays with rack delay voicings from the 1980s and today, for incredible expressive potential. Delve into DIG’s three voicings: the early ’80s adaptive delta modulation mode, the mid-’80s 12-bit pulse code modulation mode, and the modern high-resolution 24/96 mode.

Pete Celi, Co-Founder and Sound Designer, illustrates the research and sound design process in the white paper below.

In the late 1970s, integrated circuit technology had reached LSI (large scale integration) status, which gave rise to reasonably affordable, large-capacity digital memory chips. This made possible digital delay effects that could reproduce the input signal with no degradation, artifacts, or coloring, all of which were considered shortcomings of previous magnetic and analog technologies. So, what makes one digital delay sound different from another? What gives a digital delay a unique ‘personality’? Shouldn’t they all sound the same?

The key to individuality in those early delays was not in the memory chips that created the delay, but rather in the manner in which the signals were converted from analog to digital (and back) in order to make use of those memory chips. Different methods required different supporting circuitry to increase audio performance, and that circuitry also played a part in differentiating one digital delay from another. Let’s take a look at two techniques used in the earliest available rack-mount digital delays.

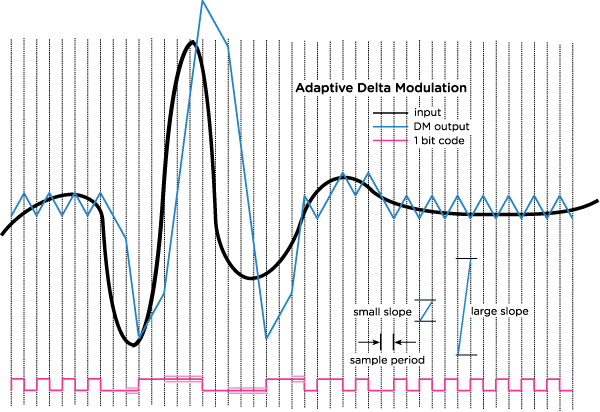

Adaptive Delta Modulation (ADM) is an extension of Delta Modulation, a conversion technique that originated in telecommunications. Delta Modulation converts an analog signal into a 1-bit digital signal by using a fixed slope to approximate the signal at each sample point. If the input signal is greater than the slope representation, a “1” is assigned, and if the signal is less than the slope signal a “0” is assigned.

This works fine for voice-bandwidth telecommunications, but lacks the fidelity required for dynamic, full-range audio. High-frequency and/or large transient input signals result in ‘slope overload’, where the output signal can’t rise or fall fast enough, producing high distortion. With low-level or low-frequency signals, excessive noise is present. Notice in the figure above how the detail in the latter part of the input signal is completely lost, and the high-peak transient is poorly represented.
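To make the fixed-step scheme concrete, here is a minimal Python sketch of a 1-bit delta modulator and its matching decoder. The step size, the 250 kHz clock, and the test tones are illustrative assumptions, not values from any particular delay unit. A slow sine stays within one step of the input, while a fast one outruns the staircase and shows slope overload.

```python
import math

def dm_encode(signal, step):
    """Fixed-step delta modulation: emit 1 if the input is above the
    running staircase approximation, else 0, then move the staircase."""
    bits, approx = [], 0.0
    for x in signal:
        bit = 1 if x > approx else 0
        approx += step if bit else -step
        bits.append(bit)
    return bits

def dm_decode(bits, step):
    """Rebuild the staircase by integrating +step or -step for each bit."""
    out, approx = [], 0.0
    for bit in bits:
        approx += step if bit else -step
        out.append(approx)
    return out

def max_error(signal, step):
    rec = dm_decode(dm_encode(signal, step), step)
    return max(abs(r - s) for r, s in zip(rec, signal))

FS = 250_000   # assumed 1-bit clock rate (Hz)
STEP = 0.002   # assumed fixed step, relative to a full scale of 1.0

def sine(freq, amp=0.5, n=2500):
    return [amp * math.sin(2 * math.pi * freq * k / FS) for k in range(n)]

slow_err = max_error(sine(100), STEP)     # tracks: error stays within one step
fast_err = max_error(sine(10_000), STEP)  # slope overload: error near the full amplitude
print(f"100 Hz: {slow_err:.4f}   10 kHz: {fast_err:.4f}")
```

The decoder mirrors the encoder's staircase exactly, so the only error is the gap between that staircase and the input: small granular steps when the signal is slow, a large lag when it changes faster than one step per sample.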

The ‘adaptive’ part of ADM extends the Delta Modulation concept by varying the weight (step size) given to each bit according to the input signal. This allows for better tracking of transients and a reduction in granular noise. Shown below is a simple adaptive process that uses a small step size by default, but switches to a larger step size when slope overload is detected, signified by three consecutive 1’s or 0’s in the digital signal. In practice, adaptive algorithms are more sophisticated than this, allowing further improvements in both transient performance and residual noise levels.

Figure 2. Adaptive Delta Modulation


Another important aspect is the clock speed, or sample rate, which is generally 250 kHz or higher. Relative to the signal shown above, the actual sample period would be much shorter than depicted, greatly improving accuracy. The appeal of this conversion technique is that it can be implemented very inexpensively, without the need for monolithic IC converter chips. The challenge is finding the proper balance between high-frequency transient tracking and low-level noise performance.
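The simple adaptive rule described above (a small step by default, a larger step after three identical bits in a row) can be sketched in Python as follows. The step sizes, the 1 kHz test tone, and the 250 kHz clock are hypothetical values chosen for illustration; real ADM designs use more elaborate adaptation, as noted above. Setting both steps equal turns the same code into plain fixed-step delta modulation, which makes the comparison easy.

```python
import math

def adm_step(history, small, large):
    """Three identical bits in a row signal slope overload: use the large step."""
    if len(history) >= 3 and history[-1] == history[-2] == history[-3]:
        return large
    return small

def adm_encode(signal, small, large):
    bits, approx = [], 0.0
    for x in signal:
        bits.append(1 if x > approx else 0)
        step = adm_step(bits, small, large)
        approx += step if bits[-1] else -step
    return bits

def adm_decode(bits, small, large):
    # The decoder sees the same bit history, so it picks the same steps.
    out, history, approx = [], [], 0.0
    for bit in bits:
        history.append(bit)
        step = adm_step(history, small, large)
        approx += step if bit else -step
        out.append(approx)
    return out

def max_error(signal, small, large):
    rec = adm_decode(adm_encode(signal, small, large), small, large)
    return max(abs(r - s) for r, s in zip(rec, signal))

FS = 250_000  # assumed clock rate (Hz)
sig = [0.5 * math.sin(2 * math.pi * 1000 * k / FS) for k in range(FS // 100)]

dm_err = max_error(sig, 0.002, 0.002)   # small == large: plain fixed-step DM
adm_err = max_error(sig, 0.002, 0.02)   # adaptive: large step on overload
print(f"fixed step: {dm_err:.3f}   adaptive: {adm_err:.3f}")
```

The small step keeps quiet, slow passages fine-grained, while the large step lets the staircase keep up with faster movement; choosing those two values is exactly the tracking-versus-noise balance described above.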

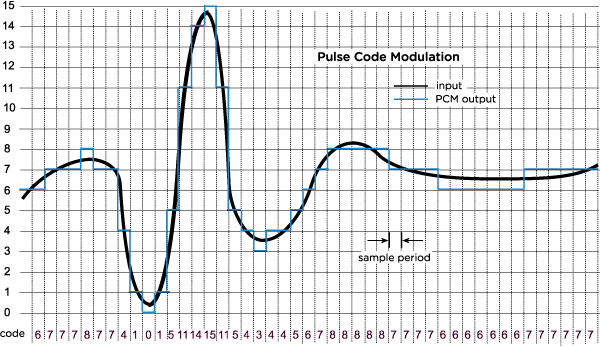

The appearance of monolithic DACs (digital-to-analog converters) packaged in a single IC led to affordable solutions with good audio specs that were independent of signal dynamics and frequency, and were instead determined by the sample rate and bit depth of the converters. Sample rates were set as low as practical, typically 32 kHz, to squeeze the most delay time out of the memory chips, while a bit depth of 12 bits offered relatively high audio performance.
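The trade-off between sample rate and delay time is simple arithmetic: each second of delay consumes sample rate × bit depth bits of memory. The sketch below assumes a hypothetical 256 Kbit of delay memory; that figure is for illustration only and does not describe any specific unit. Note that a 32 kHz rate also caps the audio bandwidth at 16 kHz (Fs/2).

```python
def max_delay_seconds(memory_bits, sample_rate_hz, bit_depth):
    """Longest delay a fixed block of memory can hold: every second of
    audio needs sample_rate_hz * bit_depth bits."""
    return memory_bits / (sample_rate_hz * bit_depth)

MEMORY_BITS = 256 * 1024  # hypothetical 256 Kbit of delay RAM

for rate in (32_000, 48_000):
    t = max_delay_seconds(MEMORY_BITS, rate, 12)
    print(f"{rate} Hz, 12-bit: {t * 1000:.0f} ms")  # 683 ms, then 455 ms
```

Lowering the sample rate from 48 kHz to 32 kHz buys half again as much delay time from the same memory, at the cost of audio bandwidth.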

Figure 3. Pulse Code Modulation


The signal amplitude range is split (quantized) into a number of steps based on the bit depth. The figure above shows a 4-bit system, which produces 16 step values. The bit depth determines the noise level of the conversion process, known as quantization noise. The maximum audio frequency that can be represented is Fs/2, or one-half of the sampling rate. This solution is attractive because the audio performance of the conversion does not depend on tuning or algorithm development.
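A uniform quantizer takes only a few lines. This sketch maps a signal in the range -1 to 1 onto 2^N levels, confirms that 4 bits give 16 levels, and measures the signal-to-quantization-noise ratio of a near-full-scale sine. The 997 Hz tone and 48 kHz rate are arbitrary test choices. The measured values should land near the textbook rule of about 6 dB per bit (6.02N + 1.76 dB), roughly 74 dB for a 12-bit converter.

```python
import math

def quantize(x, bits):
    """Uniform quantizer: 2**bits levels spanning [-1, 1)."""
    levels = 2 ** bits
    step = 2.0 / levels
    code = round(x / step)
    code = max(-levels // 2, min(levels // 2 - 1, code))  # clamp to valid codes
    return code * step

def sqnr_db(bits, n=20_000):
    """Signal-to-quantization-noise ratio of a near-full-scale sine."""
    sig = [0.999 * math.sin(2 * math.pi * 997 * k / 48_000) for k in range(n)]
    noise = [quantize(s, bits) - s for s in sig]
    p_sig = sum(s * s for s in sig) / n
    p_noise = sum(e * e for e in noise) / n
    return 10 * math.log10(p_sig / p_noise)

# Sweep the full input range: a 4-bit quantizer has 16 distinct output levels
levels_4bit = {quantize(k / 1000 - 1, 4) for k in range(2000)}
print(len(levels_4bit))  # 16
print(f"12-bit: {sqnr_db(12):.1f} dB, 16-bit: {sqnr_db(16):.1f} dB")
```

Each extra bit halves the step size, which is why the noise floor drops by about 6 dB per bit.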

The design choices made to squeeze more audio performance from these techniques play an important part in the overall sound and feel of the delay.

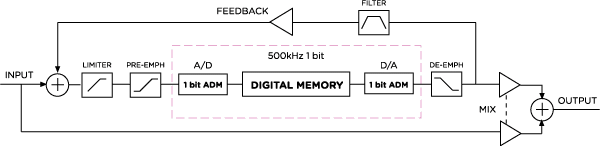

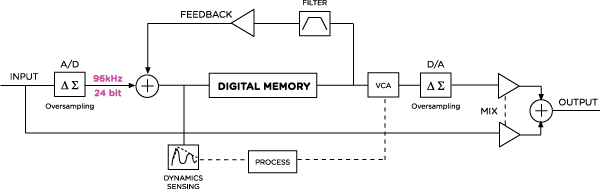

The supporting circuitry of ADM conversion systems is designed to limit transient spikes and to reduce residual noise with low-level signals. The use of a limiter before the digital conversion would tame input spikes and reduce the occurrence of slope overload conditions. To combat residual granular noise, a pre-emphasis EQ boosts the high frequencies prior to conversion, while a reciprocal de-emphasis after the conversion reduces the high end, restoring a flat signal response and reducing the granular ‘hash’ noise introduced by the conversion process. The resulting delay block diagram would look as follows:

Figure 4. Strymon DIG ADM Circuit
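The supporting blocks around the converter can be sketched as simple per-sample functions. In this sketch a hard clip stands in for the limiter (a real limiter would have attack and release behavior), and a first-order pre-emphasis filter is paired with its exact inverse. The coefficient and ceiling are hypothetical values, not Strymon's. The de-emphasis filter is a gentle low-pass: after pre-emphasis it restores the original signal exactly, but it cuts any high-frequency hash added between the two filters.

```python
def limit(x, ceiling=0.5):
    """Stand-in limiter: hard clip to +/-ceiling (hypothetical ceiling)."""
    return [max(-ceiling, min(ceiling, s)) for s in x]

def pre_emphasis(x, a=0.5):
    """High-frequency boost, unity gain at DC: y[n] = (x[n] - a*x[n-1]) / (1 - a)."""
    y, prev = [], 0.0
    for s in x:
        y.append((s - a * prev) / (1 - a))
        prev = s
    return y

def de_emphasis(y, a=0.5):
    """Exact inverse of pre_emphasis, a one-pole low-pass:
    x[n] = (1 - a)*y[n] + a*x[n-1]."""
    x, prev = [], 0.0
    for s in y:
        prev = (1 - a) * s + a * prev
        x.append(prev)
    return x

signal = limit([0.9, 0.2, -0.7, 0.1, 0.0, 0.3])
restored = de_emphasis(pre_emphasis(signal))
print(max(abs(r - s) for r, s in zip(restored, signal)))  # ~0: flat overall response

# High-frequency "hash" injected between the two filters is attenuated:
hash_noise = [0.01 if n % 2 == 0 else -0.01 for n in range(100)]
print(abs(de_emphasis(hash_noise)[-1]))  # settles near 0.01 / 3
```

With a = 0.5, the pre-emphasis gain at the highest frequency is 3 (about +9.5 dB) and the de-emphasis gain there is 1/3, so the wanted signal comes through flat while noise added at high frequencies in the middle of the chain is reduced.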


Sonic Results

While retaining high-quality repeats with lower-level signals, the ADM algorithm displays its personality in the form of reduced fidelity with higher-level, high-frequency inputs. This architecture produces a delay that enhances the percussive nature of transient attacks, which are subtly fattened by the limiting circuitry and given further color by the ADM process. It also lends a sense of depth to dynamic inputs, making it an excellent choice for rhythmic delay sounds.

In DIG, the artifacts generated by the ADM conversion are created and preserved with an internal 1-bit, 500 kHz process rate in the A/D → Delay → D/A blocks. Limiting, pre-emphasis and de-emphasis are performed with 32-bit floating point precision at 96 kHz.
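Why a fast 1-bit clock matters can be seen from the standard slope-overload condition for a fixed-step delta modulator. With step size Δ (as a fraction of full scale) and clock rate f_s, the staircase can move at most Δ·f_s per second, while a sine of amplitude A and frequency f changes at up to 2πfA per second. This is only an illustration: DIG's adaptive step logic is not described here, and the full-scale 10 kHz tone is an assumed worst case.

$$
\Delta\, f_s \;\ge\; 2\pi f A
\qquad\Longrightarrow\qquad
\Delta_{\min} = \frac{2\pi f A}{f_s}
$$

$$
f = 10\ \text{kHz},\ A = 1:\qquad
\Delta_{\min}\Big|_{f_s = 250\ \text{kHz}} = \frac{2\pi \cdot 10^{4}}{2.5 \times 10^{5}} \approx 0.251,
\qquad
\Delta_{\min}\Big|_{f_s = 500\ \text{kHz}} = \frac{2\pi \cdot 10^{4}}{5 \times 10^{5}} \approx 0.126
$$

Doubling the clock halves the step needed to follow a given slope, so finer steps (and less granular noise) become possible without giving up as much transient tracking. Even at 500 kHz, a fixed step of that size would be very coarse for quiet passages, which is one reason the step size is made adaptive.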

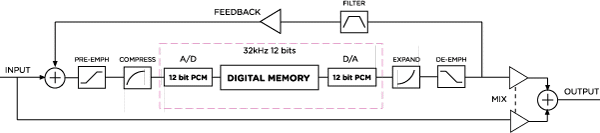

To maximize the performance of a PCM system, the input signal must be as large as possible, making the residual quantization noise small in comparison. This is achieved with a companding system, in which the signal’s dynamic range is compressed by amplifying small signals before the conversion process. When the delayed digital signal is converted back to analog, an expander reduces the low-level signals back to their original level, and also reduces the accompanying noise floor in the process. For further improvement, pre-emphasis/de-emphasis is also employed, as shown below:

Figure 5. Strymon DIG PCM Circuit
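The benefit of companding can be shown numerically. This sketch uses the μ-law curve from telephony as a stand-in compressor/expander pair. That is an assumption: the companders in actual delay hardware may have worked differently, for example by following the signal envelope rather than acting on each sample. A quiet sine, about 54 dB below full scale, is passed through a 12-bit quantizer with and without companding, and the reconstruction errors are compared.

```python
import math

MU = 255.0  # mu-law constant, used here only as an illustrative compander curve

def compress(x):
    """Boost small values before conversion (mu-law curve, |x| <= 1)."""
    return math.copysign(math.log1p(MU * abs(x)) / math.log1p(MU), x)

def expand(y):
    """Inverse of compress: return small values to their original level."""
    return math.copysign(math.expm1(abs(y) * math.log1p(MU)) / MU, y)

def quantize(x, bits=12):
    """Uniform quantizer with a step of 2 / 2**bits (inputs here never clip)."""
    step = 2.0 / 2 ** bits
    return round(x / step) * step

def rms_error(a, b):
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)) / len(a))

# A quiet input, about 54 dB below full scale
quiet = [0.002 * math.sin(2 * math.pi * 997 * k / 32_000) for k in range(3200)]

plain = [quantize(x) for x in quiet]
companded = [expand(quantize(compress(x))) for x in quiet]

print(f"plain:     {rms_error(plain, quiet):.2e}")
print(f"companded: {rms_error(companded, quiet):.2e}")
```

Because the compressor magnifies small values before they reach the fixed 12-bit step, and the expander shrinks them (and the quantization error) afterwards, the quiet signal is reproduced with a much lower noise floor than the plain path.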


Sonic Results

The conversion process and supporting circuitry combine to create a digital delay that is initially clear with a sense of warmth and dimension. Delays with high repeats gradually turn into a soft, ambient wash of sound.

In DIG, the artifacts generated by the PCM conversion are created and preserved with an internal 12-bit, 32kHz process rate in the A/D → Delay → D/A blocks. Pre-emphasis, compression, expansion and de-emphasis are performed with 32-bit floating point precision at 96kHz.

In the decades since the first digital delays came out, many advances in IC scalability and precision have been made, along with new techniques that greatly improve the accuracy of the A/D/A conversion process. Twenty-four-bit converters are affordable and routinely have audio specs 100 times better than those earlier offerings. This allows the creation of digital delays that don’t require supporting circuitry to enhance quality or overcome shortcomings inherent in the conversion process. This is a welcome improvement in signal reproduction, and it provides a platform for re-creating those earlier technologies and the characteristic attributes their circuits produced.

DIG’s 24/96 algorithm uses the high-resolution conversion to create a digital delay that faithfully recreates the input signal, while employing a subtle dynamics block that allows the delayed signal to sit under the dry signal while you’re playing. All internal processing is done with 32-bit floating point precision at 96kHz.

Figure 6. Strymon DIG 24/96 Circuit
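The details of DIG's dynamics block are not given here, but the behavior described (repeats that sit under the dry signal while you play) is commonly achieved with "ducking": an envelope follower on the dry input turns the wet signal down while the input is loud. The sketch below is a generic ducker, not Strymon's algorithm; the depth, threshold, and release values are hypothetical.

```python
def duck(dry, wet, depth=0.5, threshold=0.1, release=0.999):
    """Generic ducker: attenuate `wet` by up to `depth` while `dry` is loud."""
    env, out = 0.0, []
    for d, w in zip(dry, wet):
        level = abs(d)
        # Envelope follower: jump up instantly, decay exponentially.
        env = level if level > env else env * release
        # Gain falls from 1.0 toward (1 - depth) as the envelope rises toward the threshold.
        gain = 1.0 - depth * min(1.0, env / threshold)
        out.append(w * gain)
    return out

playing = duck([0.8] * 5, [1.0] * 5)   # loud input: repeats pulled down
resting = duck([0.0] * 5, [1.0] * 5)   # silence: repeats at full level
print(playing, resting)
```

The slow release lets the repeats swell back up gradually once you stop playing, rather than jumping back to full level.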

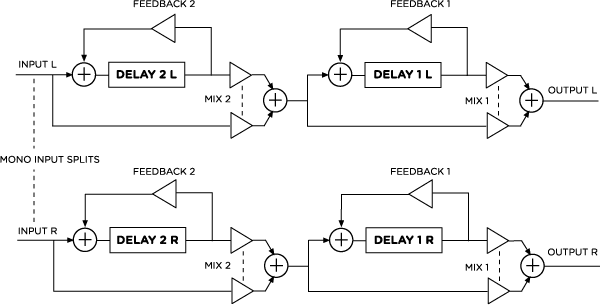

DIG features two true-stereo delays that allow for many creative, rhythmic and ambient delay effects. By default, Delay 2 is a synchronous subdivision of Delay 1, and it can be set to run ‘free’ if desired. The two delays can be combined in three different ways to further expand the possibilities.
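Synchronous subdivision means Delay 2's time is derived from Delay 1's time by a fixed musical ratio rather than set independently. The ratios below are hypothetical examples (this article does not list the subdivisions DIG offers), and they assume Delay 1 is set to a quarter note.

```python
from fractions import Fraction

# Hypothetical ratios, assuming Delay 1 is set to a quarter note.
SUBDIVISIONS = {
    "eighth": Fraction(1, 2),
    "dotted eighth": Fraction(3, 4),
    "eighth triplet": Fraction(1, 3),
    "quarter triplet": Fraction(2, 3),
}

def quarter_note_ms(bpm):
    """Length of one quarter note, in milliseconds, at a given tempo."""
    return 60_000 / bpm

def delay2_ms(delay1_ms, name):
    """Synced Delay 2 time as a fixed fraction of the Delay 1 time."""
    return float(delay1_ms * SUBDIVISIONS[name])

d1 = quarter_note_ms(120)  # 500.0 ms
for name in SUBDIVISIONS:
    print(f"{name}: {delay2_ms(d1, name):.1f} ms")
```

Because Delay 2 is computed from Delay 1, changing the main delay time (or tapping a new tempo) keeps the rhythmic relationship between the two intact; a ‘free’ Delay 2 would simply ignore the ratio.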

In Series configuration, Delay 2 (the rhythmic sub-delay) feeds Delay 1 in the following manner:

Figure 7. Strymon DIG Series Configuration

This is equivalent to putting two single delay pedals in succession on your pedal board. A mono input feeds both left and right channels.
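The series routing can be sketched with two minimal delay lines, where Delay 2's full output (dry plus echo) becomes Delay 1's input, just like two pedals chained on a board. The integer-sample delay times and the feedback and mix defaults are hypothetical; feeding in a single impulse makes the resulting echo pattern easy to read.

```python
from collections import deque

class Delay:
    """Minimal feedback delay line with an integer delay in samples (a sketch)."""

    def __init__(self, samples, feedback=0.0, mix=1.0):
        self.buf = deque([0.0] * samples, maxlen=samples)  # oldest sample at index 0
        self.feedback = feedback
        self.mix = mix

    def process(self, x):
        delayed = self.buf[0]
        self.buf.append(x + self.feedback * delayed)  # maxlen drops the oldest sample
        return x + self.mix * delayed                 # dry plus wet, like a pedal's output

def series(signal, delay1, delay2):
    """Delay 2 feeds Delay 1, as with two pedals in succession."""
    return [delay1.process(delay2.process(x)) for x in signal]

impulse = [1.0] + [0.0] * 9
out = series(impulse, Delay(5), Delay(2))
print([n for n, v in enumerate(out) if v])  # [0, 2, 5, 7]
```

Every echo from Delay 2 is itself echoed by Delay 1, so the impulse at sample 0 and Delay 2's repeat at sample 2 each reappear five samples later.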

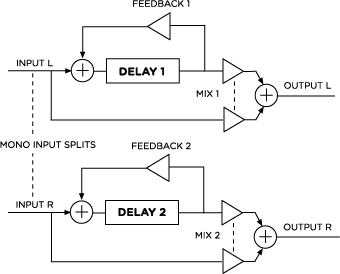

In Parallel configuration, the delays do not interact with each other, but produce their outputs ‘side-by-side’ as shown:

In Stereo, Delay 1 outputs to the left channel, and Delay 2 outputs to the right channel. When the right output is not used, the wet signal sums to mono so that both parallel delays are heard from the left output.

Figure 8. Strymon DIG Parallel Configuration
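In parallel, both delays hear the same input and never feed each other. This sketch sends Delay 1's echoes to the left output and Delay 2's to the right in stereo, and sums both wet signals into the left output in mono, as described above. The delay lengths are hypothetical, and mix levels are left at unity for simplicity.

```python
from collections import deque

class Delay:
    """Minimal wet-only delay line with an integer delay in samples (a sketch)."""

    def __init__(self, samples, feedback=0.0):
        self.buf = deque([0.0] * samples, maxlen=samples)
        self.feedback = feedback

    def wet(self, x):
        delayed = self.buf[0]
        self.buf.append(x + self.feedback * delayed)
        return delayed

def parallel(signal, delay1, delay2, stereo=True):
    """Both delays hear the same input. Stereo: Delay 1 left, Delay 2 right.
    Mono: both wet signals are summed into the left output."""
    left, right = [], []
    for x in signal:
        w1, w2 = delay1.wet(x), delay2.wet(x)
        if stereo:
            left.append(x + w1)
            right.append(x + w2)
        else:
            left.append(x + w1 + w2)
    return left, right  # right stays empty in mono

impulse = [1.0] + [0.0] * 7
l, r = parallel(impulse, Delay(3), Delay(5))
mono, right_unused = parallel(impulse, Delay(3), Delay(5), stereo=False)
print([n for n, v in enumerate(mono) if v])  # [0, 3, 5]
```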

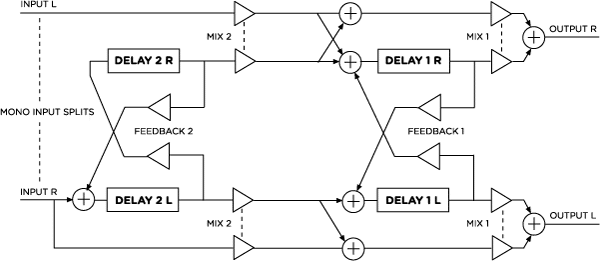

In Ping Pong Mode, the delays are configured in a series ‘ping-pong’ configuration. When using a mono output signal, this configuration is the same as the Series configuration. In stereo, each delay acts as a ping-pong delay, and they interact when both Mix knobs are turned up.

Figure 9. Strymon DIG Ping Pong Configuration
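In a ping-pong delay, each echo crosses to the opposite channel before repeating. The sketch below is a single, generic stereo ping-pong delay with a hypothetical delay length and feedback amount; it does not model how DIG's two ping-pong delays interact when both Mix knobs are turned up.

```python
from collections import deque

def ping_pong(signal, samples, feedback=0.5):
    """Generic ping-pong: echoes alternate left, right, left... (a sketch)."""
    left_buf = deque([0.0] * samples, maxlen=samples)
    right_buf = deque([0.0] * samples, maxlen=samples)
    out_l, out_r = [], []
    for x in signal:
        dl, dr = left_buf[0], right_buf[0]
        left_buf.append(x + feedback * dr)  # input plus the right echo feed the left line
        right_buf.append(dl)                # the left echo crosses over to the right line
        out_l.append(x + dl)
        out_r.append(x + dr)
    return out_l, out_r

impulse = [1.0] + [0.0] * 11
out_l, out_r = ping_pong(impulse, samples=3)
# Echoes: left at sample 3, right at sample 6, left again at sample 9 (half level)
print(out_l)
print(out_r)
```

The feedback is applied only where the right echo returns to the left line, so each full left-right round trip is scaled once by the feedback amount.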

DIG’s dual delay architecture, flexible routing options, and classic digital delay voicings allow vast sonic possibilities.


4 Responses

I’ve played guitar 50 years and was an engineer at HP. Nice to have this info and a clear, concise walk down memory lane and the tech development.

I have a Blue Sky and a Mobius and think the rotary of the Mobius is fantastic.

Rather than re-creating the old circuit performance, I like to create new and unusual effects.

I haven’t looked at the capabilities of DIG, but I really like “reverse” features.

thanks, steve

northern california

Thanks for the detailed article and description of the pedal and delay in general. I’m gonna be honest, you lost me after the first couple paragraphs. I would like to HEAR a comparison of HOW this different, new and improved delay mechanism sounds different or better than the former methods.

I appreciate the innovation, but to me, it’s like: can I look at a photo and tell the difference between it being shot on a 35mm or an iPhone with a filter? No. Most people cannot either. So, I just really care about convenience and cost, like most people.

I look forward to a comparison demo.

“Degradation, artifacts, [and] coloring” considered “shortcomings” of analogue technology? Really?! That’ll be why you see vintage Echoplex units rusting in skips.

@Ben – the degradation, artifacts, and coloring of those early tape and analog units were indeed often considered negatives through the 80s and 90s, until widespread nostalgia for those units arose.

When digital delays were introduced, they provided a cleaner, more reliable delay that was desired at the time. In the 80s there was a move away from those early technologies to get towards a ‘perfect’ replication of sound.

The interesting thing is, now there is nostalgia for these early digital technologies, because they have very unique and individual sounds as well! 🙂

Certainly Echoplex units won’t ever go away. But it is interesting to see how popularity of particular effects fades and comes back over time.